Part 1: So there’s this thing called artificial intelligence (A.I.)

A.I. is working in small ways on devices you’re probably using every day. It’s becoming more popular in both helpful and dangerous ways. We want you to feel equipped to tell illuminating stories about how A.I. is shaping your world, but first, let’s break down what A.I. is by exploring the forms it takes in our daily lives.

CONSIDER: What do you think about when you hear the words “artificial intelligence”? In what ways do you interact with A.I. daily?

Simply put, artificial intelligence is the ability of machines (computers, phones, cars, robots, etc.) to perform tasks that usually require human intelligence, such as recognizing faces, understanding speech or making decisions. A.I. has made its way into many of the machines we use every day.

Part 2: So there’s A.I. and then there’s machine learning (M.L.)

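Machine learning is a subset of A.I. in the same way that A.I. is a subset of computer science. Basically, it’s the capability of a machine to learn how to perform certain tasks on its own, from examples and feedback rather than from step-by-step instructions written by a programmer. Much of that learning works like trial and error: the machine makes a guess, checks how far off it was and adjusts itself to do better next time.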
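For the curious, here is a minimal sketch in Python of one of the oldest machine learning methods, the perceptron, which learns in this guess, check and adjust way. The song features, the example data and the listener’s tastes are all invented for illustration; this is not how Spotify or any other real service works.

```python
# Each song is described by three yes/no features (1 = yes, 0 = no):
# (is_upbeat, has_vocals, is_over_5_minutes). The labels are invented:
# this hypothetical listener likes upbeat songs that are not too long.
SONGS = [
    ((1, 1, 0), 1),
    ((1, 0, 0), 1),
    ((1, 1, 1), 0),
    ((0, 1, 0), 0),
    ((0, 0, 1), 0),
    ((1, 0, 1), 0),
]


def predict(weights, bias, features):
    """Guess 1 ("will like") if the weighted sum is positive, otherwise 0."""
    total = bias + sum(w * x for w, x in zip(weights, features))
    return 1 if total > 0 else 0


def train(examples, max_rounds=100):
    """Perceptron training: guess, compare with the real answer, adjust."""
    weights = [0] * len(examples[0][0])
    bias = 0
    mistakes_per_round = []
    for _ in range(max_rounds):
        mistakes = 0
        for features, label in examples:
            error = label - predict(weights, bias, features)  # 0, +1 or -1
            if error != 0:
                mistakes += 1
                # Nudge each weight in the direction that would have fixed this guess.
                weights = [w + error * x for w, x in zip(weights, features)]
                bias += error
        mistakes_per_round.append(mistakes)
        if mistakes == 0:  # a whole round with no wrong guesses: stop
            break
    return weights, bias, mistakes_per_round


if __name__ == "__main__":
    weights, bias, history = train(SONGS)
    print("wrong guesses in each round:", history)
    # A combination the program never saw during training.
    print("guess for (not upbeat, vocals, long):", predict(weights, bias, (0, 1, 1)))
```

Each pass through the examples is one round of trial and error, and the program stops once it gets through a whole round without a wrong guess. A perceptron is only guaranteed to reach that point when some weighted yes/no rule can separate the likes from the skips, which is true for this toy data.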

You might not think of yourself as a teacher, but machine learning gives you an opportunity to school some technology. You’re teaching machines whenever you search for something online, listen to a song on Spotify or play any of Google’s A.I. games. (Our favorites are quickdraw.withgoogle.com and autodraw.com.)

ACTIVITY: What has your machine learned about you? (5-10 minutes)

Pull out your phone and open your Instagram app. (If you’re not on Instagram, find a friend who is.) Go to the “Explore” tab (it looks like a little magnifying glass). Identify at least 5 themes in the images and accounts that Instagram wants you to explore.

After doing the activity, consider:

- Why do you think Instagram is showing you these particular images?

- What has Instagram learned about you?

THE DARK SIDE: When you train machines, you’re providing data, and we’d be doing you a huge disservice if we didn’t have you think critically about where that data might go. Most apps and devices ask you to accept terms of service agreements, and if you don’t read the fine print, you may not realize what you’ve agreed to: your data can end up anywhere (and we mean anywhere). Liking a picture of your friend’s new pup may seem harmless, and creating that online dating profile may feel like the most logical next step in getting over your ex, but these seemingly small actions could result in your data being sold to undisclosed “third parties.” Training machines with your data can also expose you to security and privacy violations. Targeted ads are one thing; leaks of personal information are another.

Part 3: There’s A.I. and M.L. and then there’s natural language processing (N.L.P.)

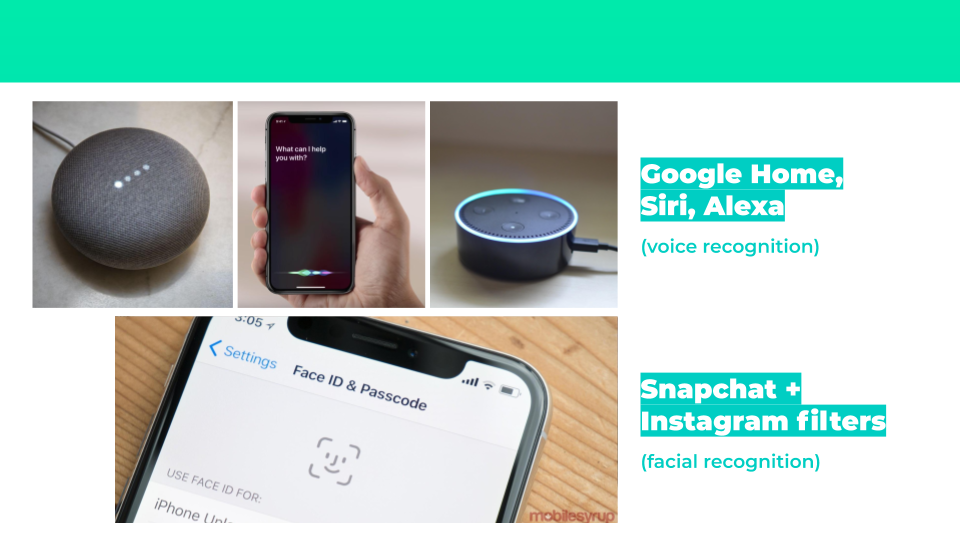

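“Hey Siri, what is natural language processing?” N.L.P. is the branch of A.I. that helps machines understand and respond to human language, whether it’s typed or spoken. It powers voice assistants such as Google Home, Siri and Alexa.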

ACTIVITY: Exploring N.L.P. with Eliza and SimSensei (20 minutes)

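Eliza, created by Joseph Weizenbaum at the Massachusetts Institute of Technology (MIT) in 1966, is often considered the first N.L.P. computer program that you could talk to. It was a chatbot modeled on Rogerian psychotherapy, a style of therapy in which the therapist mostly reflects a patient’s statements back as questions. The experiment yielded surprising results: some people who chatted with Eliza treated it as if it truly understood them, even though it was only matching patterns in their words. Follow this link to see what Eliza is all about.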

After interacting with Eliza, consider:

- What did you notice about the way that Eliza chats?

- What do you think Eliza’s creators were thinking when they programmed Eliza to chat in this specific way?

- If you were to create your own chatbot, what would you make sure to include/consider?

- Do you think that you could have a stimulating conversation with Eliza for 5 minutes? How about 20 minutes? Why or why not?
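Eliza did not understand what people typed. The following is a minimal sketch in Python of the keyword matching and pronoun “reflection” that Eliza-style chatbots rely on. The rules, replies and word table here are invented for illustration and are far simpler than Eliza’s original script, written by Joseph Weizenbaum.

```python
import re

# Swap first- and second-person words so the bot can "reflect" a statement
# back at the user. This tiny table is an illustrative assumption.
REFLECTIONS = {
    "i": "you",
    "me": "you",
    "my": "your",
    "am": "are",
    "you": "I",
    "your": "my",
}

# Hypothetical rules, far simpler than Eliza's original script: each pairs a
# pattern with a reply template, where {0} is the reflected captured text.
RULES = [
    (re.compile(r"i feel (.*)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"i am (.*)", re.IGNORECASE), "Why do you say you are {0}?"),
    (re.compile(r"my (.*)", re.IGNORECASE), "Why do you say your {0}?"),
    (re.compile(r"because (.*)", re.IGNORECASE), "Is that the real reason?"),
]
FALLBACK = "Please go on."


def reflect(fragment):
    """Turn 'my friends ignore me' into 'your friends ignore you'."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


def respond(statement):
    """Return the reply for the first rule whose pattern matches the statement."""
    text = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.match(text)
        if match:
            return template.format(*(reflect(group) for group in match.groups()))
    # No keyword matched: fall back to a neutral prompt to keep the user talking.
    return FALLBACK


if __name__ == "__main__":
    for line in ["I feel ignored by my friends.", "My mother never listens to me", "It is raining"]:
        print("> " + line)
        print(respond(line))
```

Notice that the bot never needs to know what “ignored” or “mother” means: it just rearranges your own words, which is one reason long conversations with a program like this tend to feel repetitive.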

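Almost 50 years later, researchers at the University of Southern California (USC) created SimSensei, a more sophisticated chatbot presented as a virtual human. It uses visual and audio cues, such as facial expressions, body posture and tone of voice, to react and respond to human behavior. It was initially created to support healthcare, for example by helping identify signs of distress such as depression and post-traumatic stress disorder (PTSD). Watch this video on SimSensei to see how it works.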

After watching the SimSensei video, consider:

- What did you notice about the way that SimSensei chats?

- What are the different types of information that SimSensei is using when a person sits down to chat with her?

- Can you think of any consequences, either good or bad, that might come from using SimSensei?

- Do you think that you could have a stimulating conversation with SimSensei for 5 minutes? How about 20 minutes? Why or why not?

THE DARK SIDE: “Okay Google, N.L.P. seems to make life a little easier, but what’s the catch?” Today’s natural language processing relies heavily on machine learning, so a lot of the same dangers apply: as a consumer of these products, you are electing to help train and refine these technologies. The twist is that many of these products are, in effect, internet-connected microphones, so a whole host of other potential security problems arise.

Even though most of these devices have to be “awakened” with a word or phrase, a lot of people are reasonably concerned about “passive listening.” There have even been instances of these devices being activated accidentally by crosstalk or noise from a television. Accidental activation is bad enough, but the possibility of casual conversations from your home being recorded and leaked is an even more serious breach.

THE DARKER SIDE: Something a little creepier is that the more we chat with our smart speakers and voice assistants, the more human-like they can become. So the next time you wonder if Siri is giving you a little attitude, recognize that she may well be: her personality was designed by people and has been refined using data from millions of interactions with the people who use her. Also, machines don’t only learn our good traits. They can also pick up racism, sexism and other forms of hatred from the data that trains them, which comes from us, the human users. In 2016, for example, Microsoft took its Tay chatbot offline less than a day after launching it, because users had taught it to post racist and offensive messages.

ACTIVITY: Auto-Complete Poem (20 minutes)

Natural language processing also comes into play when we’re given suggestions, either words or entire sentences, for how to write our emails and texts.

Using your phone, open your notes app. Start a new note and type one of the following: “The…”, “I…”, “We…”, or “They…”. Don’t type anything else yourself; instead, keep tapping the middle auto-complete suggestion to finish writing a “poem.” Make it as long or short as you want, as long as there’s enough content to create a poem. Then edit as necessary, deleting repeated words or phrases and adding line breaks.

Do this again in a new text message to your best friend and again to your parent or someone else in your family.

After completing the activity, consider:

- How do these three poems vary?

- Why do you think your phone suggested different words each time, even though you started with the same word?
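Phone keyboards use far more sophisticated models than this, and the details differ from company to company, but the basic idea of predicting your next word from what you have typed before can be sketched in Python with a simple bigram model: count which word tends to follow which in past messages, then suggest the most common follower. The two message histories are invented, and always taking the single most likely word is an assumption standing in for tapping the middle suggestion.

```python
from collections import Counter, defaultdict

# Invented message histories, standing in for texts you have sent before.
FRIEND_TEXTS = [
    "i am so bored",
    "i am coming over later",
    "we should get pizza",
    "i am so hungry",
]
FAMILY_TEXTS = [
    "i will be home late",
    "i will call you later",
    "we love you",
    "i will pick up milk",
]


def build_model(messages):
    """Count which word follows which in past messages (a bigram model)."""
    following = defaultdict(Counter)
    for message in messages:
        words = message.lower().split()
        for current, nxt in zip(words, words[1:]):
            following[current][nxt] += 1
    return following


def suggest(model, word, k=3):
    """Return up to k words that most often followed `word`, most common first."""
    counts = model.get(word.lower(), Counter())
    return [w for w, _ in counts.most_common(k)]


def autocomplete_poem(model, start, max_words=8):
    """Keep taking the single most likely next word until none is left or the poem is long enough."""
    words = [start]
    while len(words) < max_words:
        options = suggest(model, words[-1], k=1)
        if not options:
            break
        words.append(options[0])
    return " ".join(words)


if __name__ == "__main__":
    for name, texts in [("friend", FRIEND_TEXTS), ("family", FAMILY_TEXTS)]:
        model = build_model(texts)
        print(name, "->", autocomplete_poem(model, "I"))
```

With these made-up histories, starting from “I” gives “I am so bored” with the friend model and “I will be home late” with the family model: same first word, different past messages, different poem. When two words are tied, `Counter.most_common` keeps the one it saw first.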

THE DARK SIDE: N.L.P. in our text or email inboxes doesn’t seem so nefarious. What’s bad about being able to get through a pile of work emails faster than we could without auto-complete? At this point, you already know that we’re constantly agreeing to teach machines. However, when you use N.L.P. to streamline your communication, there’s an added layer of complicity, because you’re accepting a suggestion a machine has made for you. You may have intended to send a slightly different message, maybe with a different sentence structure or adverb, but for the sake of efficiency you went with the suggestion. After a while, what is usually suggested to you might become what you tend to say. In that way, N.L.P. shapes our behavior. It’s as if we’re being trained too. This erosion of self can make us less unique and more like machines, even as machines become more like us.

Now that you know a little more about A.I., consider:

- In what ways do you interact with A.I. daily?

- What do we gain by having A.I. in our daily lives?

- What do we lose by having A.I. in our daily lives?

Note: This resource was developed with support from The National Science Foundation and other funders.